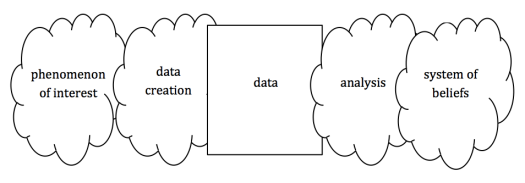

Learning from data is not a simple linear process. The following five parts are all essential and interconnected, with the data itself central but far from the most important component.

If your goal is to achieve a correct system of beliefs about the phenomenon of interest, it is important to attend to all the components of this superficially simple model and work to understand their connections.

Your system of beliefs is involved from the very start. Among the components of Conway’s data science Venn diagram, subject matter expertise is sometimes viewed as unnecessary by those more interested in algorithms than science, but if the goal is learning about something in the world on the basis of data, you have to know something to start with. The absoluteness of this claim might be a little subtle and some people disagree – Hofstadter for one, I think – while others seem to generally be on board.

A simple example: you look at a data set of library visit timestamps and see that there are no visits on Wednesdays:

- Phenomenon of interest: Is the library closed on Wednesdays? Is it open but nobody goes to the library on Wednesdays because of the great deals at Sal’s Diner?

- Data creation: Is there a bug in the logging software that makes it fail on Wednesdays? Is Andy supposed to manually record the data but he goes to Sal’s on Wednesdays? Are the timestamps systematically off by a couple days, so that Wednesday means Sunday?

- Data: The data is taken to exist, but any deterioration of it over time, or any problem in reading it, can be attributed to the data creation or analysis components.

- Analysis: Did an earlier stage of analysis drop all Wednesday data for some reason? Did the analysis read in the dates incorrectly, so that Wednesday really means Sunday?

- System of beliefs: Which of the above is most likely? How could you check? Is this Wednesday issue interesting? Is it worth pursuing further? But also: How many visitors are represented by each timestamp? Are there timestamps for both arrivals and exits? And: Is it good or bad if there are a lot of visitors? And of course: How should the analysis be changed? What should we believe?
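A first check on the Wednesday question can be sketched in a few lines of Python. This is only an illustration, not a prescribed method: the sample timestamps, the function names `visits_by_weekday` and `empty_weekdays`, and the `offset_days` parameter are all made up for the example, and it assumes the timestamps are ISO 8601 strings.

```python
from collections import Counter
from datetime import datetime, timedelta

WEEKDAYS = ["Monday", "Tuesday", "Wednesday", "Thursday",
            "Friday", "Saturday", "Sunday"]


def visits_by_weekday(timestamps, offset_days=0):
    """Count visits per weekday, optionally shifting every timestamp.

    offset_days models the hypothesis that the recorded dates are
    systematically off by some number of days.
    """
    counts = Counter({day: 0 for day in WEEKDAYS})
    for text in timestamps:
        moment = datetime.fromisoformat(text) + timedelta(days=offset_days)
        counts[WEEKDAYS[moment.weekday()]] += 1
    return counts


def empty_weekdays(counts):
    """Return the weekdays that have no recorded visits."""
    return [day for day in WEEKDAYS if counts[day] == 0]


# Hypothetical data: one visit on every day of a week except Wednesday.
sample = [
    "2024-01-01T10:15:00",  # Monday
    "2024-01-02T11:00:00",  # Tuesday
    "2024-01-04T09:30:00",  # Thursday
    "2024-01-05T14:45:00",  # Friday
    "2024-01-06T13:20:00",  # Saturday
    "2024-01-07T16:05:00",  # Sunday
]

# Taken at face value, the gap is on Wednesday.
print(empty_weekdays(visits_by_weekday(sample)))

# If the clock were three days fast, the same gap would really be Sunday.
print(empty_weekdays(visits_by_weekday(sample, offset_days=-3)))
```

The code can only show that a gap exists and how it moves under a given assumption. Deciding which explanation is right still takes knowledge from outside the data.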

If you think everything that you see in your data is an accurate signal about the phenomenon you’re interested in, you are likely to be wrong often and in ways you would much rather avoid.

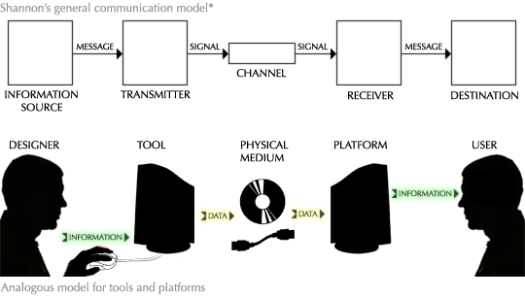

I was thinking about this just before I had the pleasure of reading Victor’s Magic Ink, where I found this similar model, itself “* Adapted from Claude Shannon, A Mathematical Theory of Communication (1948), p2.”:

This is interestingly parallel to the data/analysis model above. One difference worth articulating is that, for the data analyst, the phenomenon of interest is usually not trying to communicate. And the “tool” is not necessarily designed for the purpose it finds itself being used for. And of course, the analyst is usually, at least to some extent, both building the platform and acting as its user, on an ongoing and iterative basis.

In all cases, successful learning on the right-hand side hinges on appropriate meta-awareness of the whole process.
